AI Art Malware Threats: The Trojan Horse in NFTs

- Shilpi Mondal

SHILPI MONDAL | DATE: MARCH 23, 2026

Beyond the Surface: The Rise of Polyglot Masterpieces

For years, we viewed image files as "passive" data. You open a JPEG, you see a picture, and that's the end of it. But the rise of AI Art Malware Threats is changing that assumption entirely. Hackers are now using polyglot engineering to turn these benign files into multi-functional weapons. A polyglot is a single file that remains valid in two or more formats simultaneously. According to research shared via OpenReview, a file can appear as a perfect PNG to a viewer while functioning as a malicious Java Archive (JAR) to a server.

This isn't just basic steganography, the kind of pixel-tweaking used in the 2011 Duqu campaign. This is structural deception, and a core driver behind modern AI Art Malware Threats: files that appear harmless but execute hidden payloads. Because traditional scanners often rely on "magic numbers" (the first few bytes of a file) to identify a format, a polyglot can easily slip past filters by wearing two hats at once. Menlo Security notes that standard antivirus tools often overlook these changes because they don't inspect the internal bitmap integrity, leaving the door wide open for Cross-Site Scripting (XSS) or remote code execution (RCE).
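To make the "magic number" blind spot concrete, here is a minimal, defensive sketch using only Python's standard library. It builds a harmless PNG+ZIP polyglot in memory (a JAR is a ZIP archive, so the same structure applies), shows that a first-bytes check still reports "png", and then flags the file by walking the PNG chunk list to the IEND chunk and asking `zipfile` whether the same bytes also parse as an archive. All helper names are hypothetical, and a production scanner would check far more than this.

```python
import io
import struct
import zipfile
import zlib

PNG_SIG = b"\x89PNG\r\n\x1a\n"


def png_chunk(kind: bytes, data: bytes) -> bytes:
    """Encode one PNG chunk: length, type, data, then CRC-32 over type + data."""
    crc = zlib.crc32(kind + data) & 0xFFFFFFFF
    return struct.pack(">I", len(data)) + kind + data + struct.pack(">I", crc)


def tiny_png() -> bytes:
    """A valid 1x1, 8-bit grayscale PNG."""
    ihdr = struct.pack(">IIBBBBB", 1, 1, 8, 0, 0, 0, 0)
    idat = zlib.compress(b"\x00\x80")  # filter byte 0, then one gray pixel
    return PNG_SIG + png_chunk(b"IHDR", ihdr) + png_chunk(b"IDAT", idat) + png_chunk(b"IEND", b"")


def sniff_magic(data: bytes) -> str:
    """What a naive filter does: trust the first few bytes."""
    return "png" if data.startswith(PNG_SIG) else "unknown"


def bytes_after_iend(data: bytes) -> int:
    """Walk the chunk list and count how many bytes follow the IEND chunk."""
    pos = len(PNG_SIG)
    while pos + 12 <= len(data):
        (length,) = struct.unpack(">I", data[pos:pos + 4])
        kind = data[pos + 4:pos + 8]
        pos += 12 + length  # 4 length + 4 type + data + 4 CRC
        if kind == b"IEND":
            return len(data) - pos
    raise ValueError("no IEND chunk found")


def is_suspect_polyglot(data: bytes) -> bool:
    """Flag PNGs that carry trailing bytes or also open as a ZIP (and so a JAR)."""
    if sniff_magic(data) != "png":
        raise ValueError("not a PNG")
    return bytes_after_iend(data) > 0 or zipfile.is_zipfile(io.BytesIO(data))


# Harmless demo: zip a plain text file and append it to the image.
archive = io.BytesIO()
with zipfile.ZipFile(archive, "w") as zf:
    zf.writestr("note.txt", "just text")
polyglot = tiny_png() + archive.getvalue()

print(sniff_magic(polyglot))            # png
print(is_suspect_polyglot(tiny_png()))  # False
print(is_suspect_polyglot(polyglot))    # True
```

The trick works because image viewers read a PNG from the front, while ZIP readers locate the archive's central directory from the end of the file, so both parsers accept the same bytes.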

The Serialization Crisis: When AI Models "Bite" Back

The real nightmare for IT leaders, however, isn't just the images; it's the AI models that create them. AI Art Malware Threats now extend into the AI supply chain, where serialized model files can execute malicious code during loading. We are currently facing a "serialization crisis": most PyTorch weights and scikit-learn pipelines rely on Python's pickle module for storage. Here's the catch: Cloudsmith explains that the pickle protocol is actually a stack-based virtual machine.

When you "unpickle" a model (that is, load it into your environment), you're essentially running a sequence of opcodes. That's an opening a malicious actor can exploit: with a carefully crafted model file, they can instruct your system to fire off dangerous functions like os.system() or eval() the instant you call torch.load(). And it's more common than you'd think: recent research published on arXiv found that 95% of malicious models circulating on repositories like Hugging Face are pickle-based.
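A short, harmless Python sketch shows why "loading is executing". The class below uses `__reduce__` to tell the unpickler to call `len("side effect")` instead of rebuilding the object; a real attack would substitute something like `os.system`. `pickletools.genops` lets us inspect the opcodes without running them, and `referenced_globals` is a hypothetical, deliberately simple heuristic that lists which callables a pickle imports. It is not SafePickle and not a complete scanner. (For PyTorch specifically, `torch.load(..., weights_only=True)`, the default since PyTorch 2.6, restricts what the unpickler may import.)

```python
import pickle
import pickletools


class Booby:
    """Harmless stand-in for a booby-trapped model object."""

    def __reduce__(self):
        # A real attack would return (os.system, ("<command>",)).
        # Here the unpickler is merely told to call len("side effect").
        return (len, ("side effect",))


payload = pickle.dumps(Booby())

# Disassemble without executing: the pickle is a program for a stack machine.
opcodes = [op.name for op, _arg, _pos in pickletools.genops(payload)]
print(opcodes)  # includes STACK_GLOBAL (import a callable) and REDUCE (call it)


def referenced_globals(data: bytes) -> list[str]:
    """Heuristically list the 'module.name' callables a pickle will import.

    Tracks recent string pushes for STACK_GLOBAL; memo lookups (BINGET etc.)
    can hide names from this simple scan, so treat it as illustrative only.
    """
    found, strings = [], []
    for op, arg, _pos in pickletools.genops(data):
        if op.name == "GLOBAL":  # protocols 0-3: arg is "module name"
            found.append(arg.replace(" ", "."))
        elif op.name == "STACK_GLOBAL":  # protocol 4+: module and name were pushed as strings
            found.append(".".join(strings[-2:]))
        elif isinstance(arg, str):
            strings.append(arg)
    return found


print(referenced_globals(payload))  # ['builtins.len']
# Loading does not rebuild a Booby; it performs the call instead.
print(pickle.loads(payload))  # 11
```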

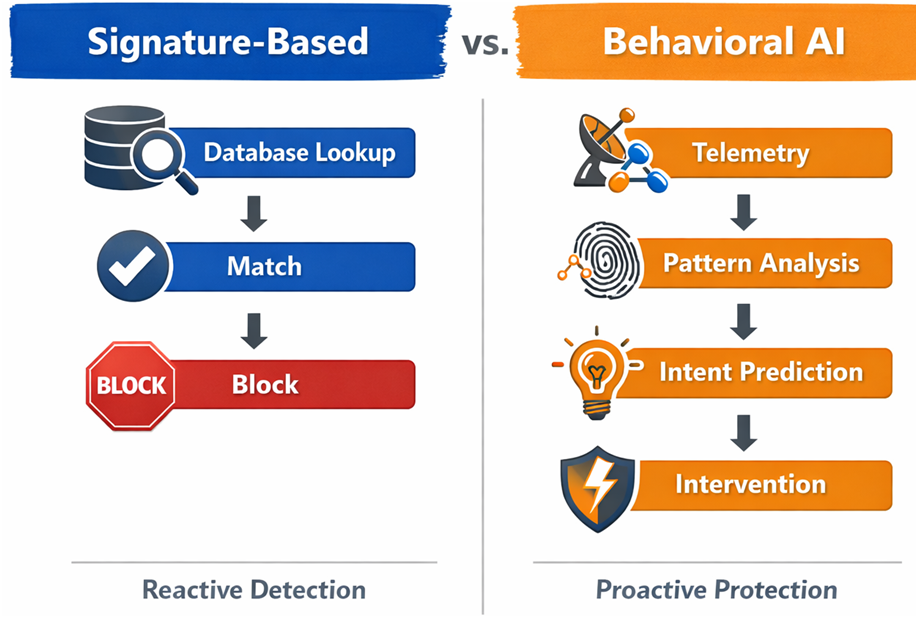

Impact Callout: Traditional signature-based scanners like ClamAV have a near 0% detection rate for these evasive serialization exploits.

NFTs: A "Target-Rich" Environment for Drainware

As we look at the blockchain side of the house, the vulnerability shifts from the file to the contract. AI Art Malware Threats are also emerging in NFT ecosystems, where drainware and metadata manipulation expose users to asset theft. The NFT market has become what EurekAlert! describes as a "target-rich environment" where innovation has outpaced security maturity.

One of the most dangerous tools in the attacker's kit is "drainware": malicious smart contracts built to empty a user's digital wallet. These attacks usually rely on social engineering to trick users into granting permissions through functions like setApprovalForAll. The function was designed to let marketplaces transfer assets on a user's behalf, but a single approval lets the approved operator move every token the victim holds in that collection, which is exactly the control attackers are after.
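Before signing, the raw transaction data already reveals whether you are about to call setApprovalForAll. The Python sketch below decodes that calldata and prints a plain-language warning. It assumes the widely published 4-byte selector `0xa22cb465` for `setApprovalForAll(address,bool)` (hardcoded because `hashlib.sha3_256` is NIST SHA-3, not Ethereum's Keccak-256), and the operator address in the demo is hypothetical. This only inspects calldata; it does not simulate the transaction.

```python
# Widely published selector: keccak256("setApprovalForAll(address,bool)")[:4].
SET_APPROVAL_FOR_ALL = bytes.fromhex("a22cb465")


def decode_set_approval_for_all(calldata_hex: str):
    """Return (operator, approved) if the calldata is setApprovalForAll, else None."""
    data = bytes.fromhex(calldata_hex.removeprefix("0x"))
    if data[:4] != SET_APPROVAL_FOR_ALL:
        return None
    if len(data) != 4 + 32 + 32:
        raise ValueError("malformed setApprovalForAll calldata")
    operator_word, approved_word = data[4:36], data[36:68]
    flag = int.from_bytes(approved_word, "big")
    # ABI rules: an address is left-padded with 12 zero bytes; a bool is 0 or 1.
    if any(operator_word[:12]) or flag > 1:
        raise ValueError("non-canonical ABI encoding")
    return "0x" + operator_word[12:].hex(), flag == 1


def review(calldata_hex: str) -> str:
    """Plain-language summary to show a user before they sign."""
    decoded = decode_set_approval_for_all(calldata_hex)
    if decoded is None:
        return "not a setApprovalForAll call"
    operator, approved = decoded
    if approved:
        return f"WARNING: {operator} may transfer ALL your tokens in this collection"
    return f"revokes operator rights for {operator}"


# Hypothetical operator address, ABI-encoded by hand.
operator = "ab" * 20
calldata = "0xa22cb465" + "00" * 12 + operator + "00" * 31 + "01"
print(review(calldata))
```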

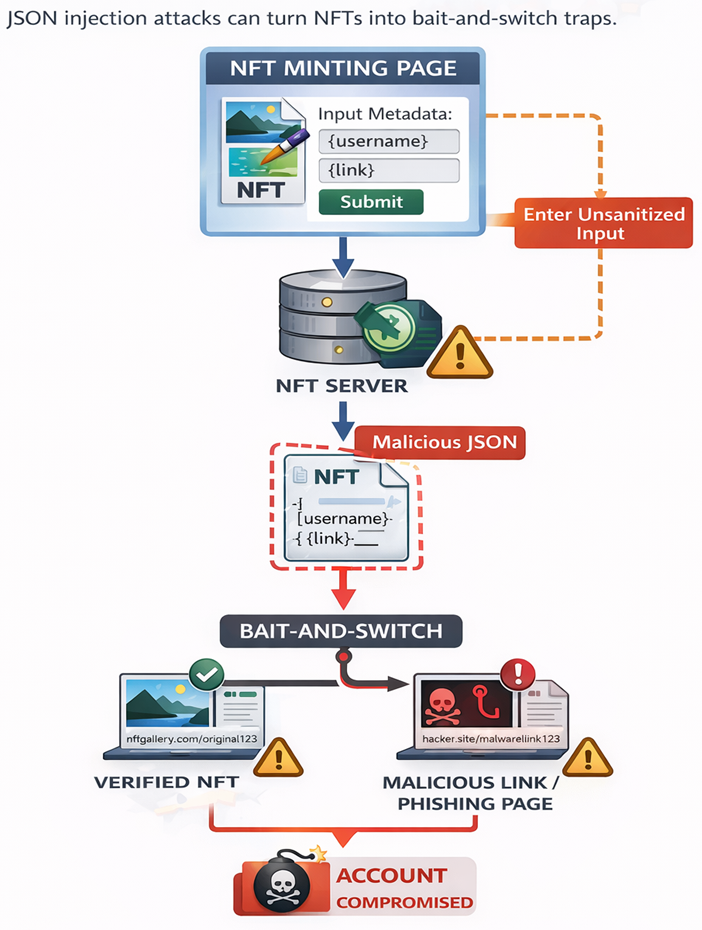

Furthermore, because storing high-res art on-chain is expensive, most NFTs point to external metadata. Tencent Cloud warns that if this metadata isn't sanitized, attackers can use "JSON Injection" to perform a bait-and-switch, replacing your verified asset with a malicious link or a phishing page after the purchase is complete.
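The source doesn't spell out the injection mechanism, but one common way it happens is metadata assembled by string concatenation, where a crafted token name closes its own string and adds a second `"image"` key. The Python sketch below contrasts that with `json.dumps`, and validates incoming metadata by rejecting duplicate keys and any image URI outside an allowlist. The allowlisted schemes (`ipfs`, `ar`) and all names here are assumptions for illustration.

```python
import json
from urllib.parse import urlparse

# Assumption for this sketch: images must live on content-addressed storage.
ALLOWED_IMAGE_SCHEMES = {"ipfs", "ar"}


def build_metadata(name: str, image_uri: str) -> str:
    """Serialize with json.dumps so user-supplied text cannot add or override keys."""
    return json.dumps({"image": image_uri, "name": name})


def _reject_duplicate_keys(pairs):
    keys = [key for key, _value in pairs]
    if len(keys) != len(set(keys)):
        raise ValueError("duplicate keys (possible JSON injection)")
    return dict(pairs)


def validate_metadata(raw: str) -> dict:
    """Parse strictly and reject images hosted outside the allowlisted schemes."""
    meta = json.loads(raw, object_pairs_hook=_reject_duplicate_keys)
    image = meta.get("image") if isinstance(meta, dict) else None
    if not isinstance(image, str):
        raise ValueError("metadata needs a string 'image' field")
    scheme = urlparse(image).scheme.lower()
    if scheme not in ALLOWED_IMAGE_SCHEMES:
        raise ValueError(f"image scheme {scheme!r} is not allowed")
    return meta


# Hypothetical attacker-controlled token name that tries to override "image".
evil_name = 'Cat", "image": "https://phish.example/claim'
naive = '{"image": "ipfs://bafy-hypothetical", "name": "' + evil_name + '"}'
safe = build_metadata(evil_name, "ipfs://bafy-hypothetical")

print(validate_metadata(safe)["image"])  # ipfs://bafy-hypothetical
try:
    validate_metadata(naive)
except ValueError as exc:
    print(exc)  # duplicate keys (possible JSON injection)
```

Without the duplicate-key hook, Python's `json.loads` would silently keep the last `"image"` value, which is the attacker's phishing URL.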

Poisoning the Well: The "250-Document" Rule

The threat isn't just about stealing assets; it's about corrupting the very intelligence your business relies on. We've seen a rise in "data poisoning," where attackers insert malicious samples into training sets. This tactic is becoming a critical component of AI Art Malware Threats, planting hidden backdoors in enterprise AI systems.

A joint study by Anthropic and the Alan Turing Institute revealed a terrifyingly low barrier to entry: it takes as few as 250 poisoned documents to backdoor an AI model, regardless of its size. Whether the model has 1 billion parameters or 13 billion, that tiny fraction of "bad data" is all it takes to make the model misbehave when it encounters a specific trigger. In a corporate environment, this could mean an LLM quietly exfiltrating data the moment it sees a specific "sudo" command or a diagnostic tool that conveniently fails to flag risks in certain demographics.
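Why does model size not help? Because what matters is the absolute number of poisoned documents, not their share of the data. A back-of-envelope Python sketch makes the point. It assumes a Chinchilla-style rule of thumb of 20 training tokens per parameter and a hypothetical average of 1,000 tokens per poisoned document; neither figure comes from the study.

```python
POISONED_DOCS = 250         # the study's headline count
TOKENS_PER_DOC = 1_000      # hypothetical average poisoned-document length
TOKENS_PER_PARAM = 20       # Chinchilla-style rule of thumb for training-set size


def poisoned_share(params: float) -> float:
    """Fraction of all training tokens taken up by the fixed poisoned set."""
    training_tokens = TOKENS_PER_PARAM * params
    return POISONED_DOCS * TOKENS_PER_DOC / training_tokens


for params in (1e9, 13e9):
    print(f"{params / 1e9:.0f}B parameters -> {poisoned_share(params):.6%} of training tokens")
```

Under these assumptions the poisoned share is 13 times smaller for the 13B model, yet the same 250 documents suffice, so "it's only a tiny fraction of the data" offers no protection.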

The 2026 Shift: Agentic Predator Swarms

As we move deeper into 2026, we are witnessing the arrival of "Agentic AI" attacks. According to SecurityWeek, we are moving past static malware toward "vibe-hacking": using GenAI to mimic human behavior so convincingly that traditional phishing filters become useless.

Lumu.io predicts that by the end of this year, we will see the first major enterprise breach orchestrated by a fully autonomous AI agent. These "predator swarms" can automate the entire attack lifecycle: detecting a vulnerability, writing the exploit, and delivering the payload across thousands of endpoints in under a minute.

Defensive Engineering: How to Fight Back

The "Wild West" era of digital art and AI doesn't mean we should retreat; it means we must build better fences. At IronQlad, we advocate for a multi-layered defensive posture that treats every digital artifact as a potential threat.

Deploy Deep CDR (Content Disarm and Reconstruction): Don't just scan images; reconstruct them. Tools like Stegoslayer decode pixel values and regenerate the image from scratch, stripping away hidden scripts and unauthorized metadata while keeping the visual quality intact.
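Stegoslayer's internals aren't described here, so the following is a generic, standard-library Python sketch of the CDR idea for PNGs only. It verifies every chunk CRC, decompresses the pixel rows, checks them against the header, and writes a brand-new file containing nothing but IHDR, one IDAT, and IEND; text chunks, other metadata, and anything appended after IEND are discarded. Simplifying assumptions: palette and interlaced images are rejected, scanline filter bytes are kept rather than fully re-rasterized, and there is no guard against decompression bombs.

```python
import struct
import zlib

PNG_SIG = b"\x89PNG\r\n\x1a\n"
CHANNELS = {0: 1, 2: 3, 4: 2, 6: 4}  # gray, RGB, gray+alpha, RGBA (palettes not handled)


def chunk(kind: bytes, data: bytes) -> bytes:
    """Serialize one PNG chunk with a freshly computed CRC-32."""
    crc = zlib.crc32(kind + data) & 0xFFFFFFFF
    return struct.pack(">I", len(data)) + kind + data + struct.pack(">I", crc)


def iter_chunks(data: bytes):
    """Yield (type, body) up to and including IEND, verifying each CRC."""
    if not data.startswith(PNG_SIG):
        raise ValueError("not a PNG")
    pos = len(PNG_SIG)
    while pos + 12 <= len(data):
        (length,) = struct.unpack(">I", data[pos:pos + 4])
        if pos + 12 + length > len(data):
            raise ValueError("truncated chunk")
        kind, body = data[pos + 4:pos + 8], data[pos + 8:pos + 8 + length]
        (crc,) = struct.unpack(">I", data[pos + 8 + length:pos + 12 + length])
        if zlib.crc32(kind + body) & 0xFFFFFFFF != crc:
            raise ValueError(f"bad CRC in {kind!r} chunk")
        yield kind, body
        if kind == b"IEND":
            return
        pos += 12 + length
    raise ValueError("missing IEND")


def rebuild_png(data: bytes) -> bytes:
    """Keep only the header and pixel rows; drop text chunks, metadata, and trailers."""
    ihdr, idat = None, bytearray()
    for kind, body in iter_chunks(data):
        if kind == b"IHDR":
            ihdr = body
        elif kind == b"IDAT":
            idat += body
    if ihdr is None or len(ihdr) != 13:
        raise ValueError("missing or malformed IHDR")
    width, height, depth, color, comp, filt, interlace = struct.unpack(">IIBBBBB", ihdr)
    if color not in CHANNELS or (comp, filt, interlace) != (0, 0, 0):
        raise ValueError("PNG variant not handled by this sketch")
    row_len = 1 + (width * CHANNELS[color] * depth + 7) // 8  # filter byte + pixel bytes
    raw = zlib.decompress(bytes(idat))
    if len(raw) != row_len * height:
        raise ValueError("pixel data does not match the header")
    if any(raw[y * row_len] > 4 for y in range(height)):
        raise ValueError("invalid scanline filter type")
    # Re-encode from the decoded rows: only IHDR, one IDAT, and IEND survive.
    return PNG_SIG + chunk(b"IHDR", ihdr) + chunk(b"IDAT", zlib.compress(raw)) + chunk(b"IEND", b"")


# A 1x1 gray image carrying a script-laden text chunk and an appended trailer.
header = struct.pack(">IIBBBBB", 1, 1, 8, 0, 0, 0, 0)
dirty = (PNG_SIG + chunk(b"IHDR", header)
         + chunk(b"tEXt", b"Comment\x00<script>alert(1)</script>")
         + chunk(b"IDAT", zlib.compress(b"\x00\x80"))
         + chunk(b"IEND", b"") + b"PK\x05\x06 appended archive bytes")
clean = rebuild_png(dirty)
print(b"<script>" in clean, clean.endswith(chunk(b"IEND", b"")))  # False True
```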

Adopt "Safe" Serialization: Transition your AI workflows away from pickle and toward data-only formats like safetensors. If you must use legacy formats, use ML-based scanners like SafePickle that analyze opcode distribution rather than just looking for keywords.
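Where a legacy pickle truly can't be avoided yet, one long-standing mitigation (a different technique from the ML-based scanning described above) is the "restricted globals" pattern from Python's pickle documentation: subclass `pickle.Unpickler` and override `find_class` so that only explicitly allowlisted types can be imported. The allowlist below is hypothetical; a real one would list exactly the types your model files need.

```python
import collections
import io
import pickle

# Hypothetical allowlist: the only globals this loader may ever resolve.
ALLOWED_GLOBALS = {("collections", "OrderedDict")}


class AllowlistUnpickler(pickle.Unpickler):
    """Unpickler that refuses to import anything outside ALLOWED_GLOBALS."""

    def find_class(self, module: str, name: str):
        if (module, name) in ALLOWED_GLOBALS:
            return super().find_class(module, name)
        raise pickle.UnpicklingError(f"blocked global: {module}.{name}")


def safe_loads(data: bytes):
    """Drop-in for pickle.loads that only resolves allowlisted globals."""
    return AllowlistUnpickler(io.BytesIO(data)).load()


# A state dict of plain numbers loads fine.
state = collections.OrderedDict(layer1=[0.1, 0.2], layer2=[0.3])
print(safe_loads(pickle.dumps(state)) == state)  # True


class Rigged:
    def __reduce__(self):
        return (print, ("this should never run",))


try:
    safe_loads(pickle.dumps(Rigged()))
except pickle.UnpicklingError as exc:
    print(exc)  # blocked global: builtins.print
```

This narrows the attack surface but is not a substitute for a data-only format: safetensors stores raw tensor bytes plus a JSON header, so there is no opcode stream to abuse in the first place.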

Secure Your Metadata: To prevent bait-and-switch attacks, store your NFT metadata on decentralized, content-addressed storage such as IPFS (referenced by version 1 Content Identifiers, or CIDs) or Arweave, so the token points to data that cannot be silently swapped after the sale.
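Content addressing is what makes the swap detectable: the identifier is derived from a hash of the bytes. The Python sketch below computes a CIDv1 using the `raw` codec (0x55) and a sha2-256 multihash, encoded in lowercase base32 with the multibase prefix `b`, then checks fetched metadata against a token's `ipfs://` URI. Assumption: this only matches content stored as a single raw block; directory CIDs and larger chunked files use the dag-pb format and need a full IPFS implementation. Arweave uses transaction IDs rather than CIDs, so it is not covered here.

```python
import base64
import hashlib


def cidv1_raw(data: bytes) -> str:
    """CIDv1 for bytes stored as a single raw block with a sha2-256 multihash."""
    # <version=1><codec raw=0x55><multihash: sha2-256=0x12, length=32, digest>
    # Each varint here is below 0x80, so it fits in a single byte.
    binary = bytes([0x01, 0x55, 0x12, 0x20]) + hashlib.sha256(data).digest()
    # Multibase prefix "b" = lowercase RFC 4648 base32 without padding.
    return "b" + base64.b32encode(binary).decode("ascii").lower().rstrip("=")


def matches_token_uri(token_uri: str, fetched: bytes) -> bool:
    """True only if an ipfs:// URI names exactly the bytes we fetched."""
    if not token_uri.startswith("ipfs://"):
        return False  # a plain https:// URL can be repointed after the sale
    return token_uri.removeprefix("ipfs://") == cidv1_raw(fetched)


metadata = b'{"name": "Cat #1"}'  # hypothetical token metadata
uri = "ipfs://" + cidv1_raw(metadata)
print(uri.startswith("ipfs://bafkrei"))                 # True
print(matches_token_uri(uri, metadata))                 # True
print(matches_token_uri(uri, b'{"name": "Phish #1"}'))  # False
```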

Transaction Simulation: Before signing any smart contract interaction, use tools that simulate the transaction. This allows you to see exactly what permissions you are granting before a single Wei leaves your wallet.

The reality is that as long as we treat data and code as separate entities, we will remain vulnerable. The future belongs to those who adopt a "Zero Trust" model for every pixel, every model weight, and every smart contract.

Explore how IronQlad can support your journey toward a more resilient, AI-ready security posture by contacting our cybersecurity consultants today.

KEY TAKEAWAYS

Polyglots bypass filters: Malicious files can now hide executable code (like JAR or JS) inside standard image headers (JPEG/PNG), evading "magic number" detection.

The 250-document threshold: AI models are surprisingly fragile; as few as 250 poisoned documents can implant a backdoor in an LLM, regardless of model size.

Pickle is a liability: The standard format for AI weights is inherently unsafe; loading a model is equivalent to executing code.

Metadata is the weak link: NFT security often fails at the off-chain level through JSON injection and SVG-based XSS attacks.
